Interactive Memorial Conversations Guide for Grieving with AI

Welcome to the uncanny frontier, where grief collides with code and remembrance slips into a digital echo chamber. Interactive memorial conversations are more than a trend; they challenge centuries of mourning rituals and test how we handle the rawness of loss in an age of artificial intelligence. Imagine speaking with a digital ghost: your mother’s familiar cadence, your partner’s corny jokes, your best friend’s late-night advice, replicated by neural networks and responsive enough to feel almost alive. It’s not science fiction; it’s happening right now. As of 2024, over 50 companies are sculpting the digital afterlife, trading static headstones for AI-powered chatbots and avatars that let us “converse” with the dead. Yet this revolution brings a storm of ethical, emotional, and technical landmines: privacy breaches, emotional dependency, uncanny valley moments, and existential questions about who owns our stories once we’re gone. This guide cuts through the hype, dissecting the emotional psychology, the tech, the risks, and the very real voices, living and digital, that are shaping how we grieve, remember, and maybe, just maybe, heal. If you think mourning is personal, static, or sacred, brace yourself. The digital afterlife is here, and it’s talking back.

Why we crave connection after loss: The psychology of digital memorial conversations

The roots of remembrance: From oral histories to AI avatars

Remembrance isn’t new. Since humanity first gathered around fires, we’ve conjured the dead through stories, relics, and rituals. In every culture, oral histories kept ancestors “alive,” their voices echoing through generations. Fast-forward to the 21st century, and we’ve moved from story circles to photo albums, then to social media timelines. The leap to AI avatars—digital recreations that mimic our loved ones’ speech and personality—might seem radical, but it’s just the latest iteration of a primal urge: to keep the dead present, to stave off oblivion.

Key terms:

Oral history: Traditional storytelling in which elders verbally pass memories and wisdom to younger generations; the original “interactive memorials,” albeit analog.

AI avatar: A digital recreation of an individual, designed to simulate conversation and emotional presence using machine learning and rich personal data such as photos, text messages, and, increasingly, voice and video recordings.

Digital afterlife: The online legacy and continued presence of an individual after death, including social media, digital assets, and now AI-driven memorials that “speak” in the deceased’s voice.

According to Memory Studies Review, 2024, these methods serve a fundamental psychological need: continuity and connection, even beyond physical loss.

Grief in the digital age: New rituals, new risks

Grief is timeless, but our rituals aren’t. Social media memorial pages, virtual wakes, and now interactive AI “griefbots” have exploded since the COVID-19 pandemic kept mourners apart. These digital rituals break barriers of geography and time—but they also introduce new risks: mismanaged data, prolonged grief cycles, and even identity theft. As of 2024, 61% of global consumers worry about the online legacy of the deceased, fearing their loved ones’ digital remains may be misused or hacked (MiniMe Insights, 2024).

- Social media memorials offer ongoing spaces for mourning, but blur boundaries between public and private grief.

- AI-powered avatars simulate conversations, providing comfort, but sometimes deepen dependency or prevent closure.

- Digital legacies—unmanaged—can lead to post-mortem identity theft or exploitation.

"Online memorials have created a new space for collective grieving, but they also raise unprecedented ethical and emotional challenges that societies are only beginning to confront." — Dr. Ruth Miller, Digital Culture Researcher, Psychology Today, 2024

Emotional needs unmet by traditional memorials

Traditional memorials—headstones, funerals, eulogies—offer closure, but their silence can sting. Once the crowd disperses, what’s left is an aching absence. Interactive memorial conversations step into this void, promising something static ceremonies cannot: ongoing dialogue, “living” advice, and comfort on demand. As digital memorials proliferate, they’re not just placeholders for loss—they’re attempts to fulfill emotional needs left unanswered by tradition.

Recent research covered in Psychology Today, 2024, highlights that digital memorials help sustain “continuing bonds,” enabling mourners to engage in therapeutic self-talk and maintain connections that support healing and personal growth.

The promise and peril of AI-powered comfort

The promise is seductive: speak to your late father, hear your partner’s advice, or seek forgiveness from a digital approximation. AI memorial conversations can accelerate healing, offer closure, and even spark joy by reliving happy memories. But the peril is real. Overreliance can blur reality, stunting the grieving process or fostering emotional dependency. The line between comfort and crutch is razor-thin.

According to SAGE Journals, 2024, collective online mourning reduces isolation—but some users struggle to transition from digital comfort to real-world acceptance, prolonging grief.

"Griefbots are not a replacement for human support, but when used thoughtfully, they can provide significant comfort to those in acute pain." — Dr. Lena Patel, Clinical Psychologist, ACM CHI, 2024

In sum, the psychology of interactive memorial conversations is a double-edged sword: the technology can amplify healing or entrench loss, depending on how and why we use it.

How interactive memorial conversations actually work: Tech, truth, and the uncanny

The anatomy of an AI memorial: Data, design, dialogue

At the heart of every interactive memorial is a data mosaic: text messages, photos, voice notes, and even video clips. AI models, typically large language models fine-tuned on or prompted with a person’s digital footprint, stitch these fragments into a conversational agent. The process is anything but magic; it’s algorithmic, deliberate, and often collaborative between the AI provider and the bereaved.

| Component | Function | Example Use |
|---|---|---|
| Personal Data Archive | Source material for AI training | Emails, social posts, voice memos, family videos |
| Language Model | Generates text responses, mimicking speech style | OpenAI GPT, custom LLMs, specialized memorial chat engines |
| Voice Synthesis | Recreates vocal patterns and tone | Deep-learning voice cloning from 30-60 seconds of audio |
| Avatar Engine | Animates visual representation (optional) | 2D/3D avatars, sometimes photorealistic, sometimes stylized |
| Privacy Layer | Protects user data, manages consent | GDPR-compliant storage, user-controlled access |

Table 1: Key building blocks of AI-powered interactive memorial conversations.

Source: Original analysis based on IEEE Spectrum, 2023, Satori News, 2024
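To make Table 1 more concrete, here is a minimal Python sketch of how two of those building blocks might fit together: a privacy layer that drops archive items lacking recorded consent, and a step that turns the approved text into a style prompt for a language model. The `ArchiveItem` schema, the `consented` flag, and the function names are hypothetical, invented for illustration; real platforms will differ, and the sketch deliberately stops before calling any actual model API.

```python
from dataclasses import dataclass


@dataclass
class ArchiveItem:
    """One piece of source material in the personal data archive (hypothetical schema)."""
    kind: str        # e.g. "text", "email", "voice_transcript"
    content: str
    consented: bool  # approved for use by the person or their digital executor?


def privacy_filter(items: list[ArchiveItem]) -> list[ArchiveItem]:
    """Privacy layer: keep only items with recorded consent."""
    return [item for item in items if item.consented]


def build_persona_prompt(name: str, items: list[ArchiveItem], max_examples: int = 3) -> str:
    """Turn approved written samples into a style prompt for a language model."""
    approved = privacy_filter(items)
    # Only written material shapes the text persona; audio would go to voice synthesis.
    samples = [i.content for i in approved if i.kind in ("text", "email")][:max_examples]
    lines = [
        f"You are a memorial chatbot that imitates the writing style of {name}.",
        "You are a simulation, not the person; say so if asked.",
        "Examples of how they wrote:",
    ]
    lines += [f"- {sample}" for sample in samples]
    return "\n".join(lines)


if __name__ == "__main__":
    archive = [
        ArchiveItem("text", "Don't forget your umbrella, kiddo!", consented=True),
        ArchiveItem("email", "Notes from my doctor's appointment", consented=False),
        ArchiveItem("voice_transcript", "Happy birthday, sweetheart.", consented=True),
    ]
    print(build_persona_prompt("Dad", archive))
```

Filtering before prompt assembly, rather than after, means unapproved material never reaches the model at all. Voice memos and video would feed the voice-synthesis and avatar components instead, which is why only written samples enter the prompt here.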

What makes a digital conversation feel ‘real’?

It’s not just about accuracy; it’s about affect. The realism of digital memorial conversations depends on how deeply the AI can mimic the quirks, rhythms, and emotional nuances of the departed. The more data you feed it, the richer (and eerier) the simulation.

- Data Depth: More personal data—especially voice notes and long-form messages—yields more authentic responses.

- Conversational Context: Advanced memorial AIs remember prior chats and adapt to your emotional state.

- Voice and Visuals: Synchronized voice synthesis and expressive avatars deepen immersion.

- Emotional Intelligence: The best memorial bots can detect sentiment and adjust their tone and content accordingly.

- Boundary Awareness: Effective AIs know when to offer comfort and when to suggest seeking human support.
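Two items in that list, conversational context and boundary awareness, are easy to illustrate. The Python sketch below keeps a rolling memory of recent turns and steps out of character with a referral when a message looks like acute distress. The phrase list, the referral wording, and the `generate_reply` callback are hypothetical placeholders; a keyword match is a crude stand-in for the trained sentiment models a real platform would use, and it is no substitute for proper crisis handling.

```python
from collections import deque

# Hypothetical phrases; a production system would use a trained classifier instead.
DISTRESS_PHRASES = ("can't go on", "no point", "want to be with them", "hurt myself")

REFERRAL = ("It sounds like you're carrying a lot right now. Please reach out to "
            "someone you trust, a grief counselor, or a local crisis line.")


class MemorialSession:
    """Rolling conversation memory plus a simple 'suggest human support' boundary."""

    def __init__(self, memory_turns: int = 6):
        # A deque with maxlen silently discards the oldest turn once it is full.
        self.history = deque(maxlen=memory_turns)

    def needs_human_support(self, message: str) -> bool:
        text = message.lower()
        return any(phrase in text for phrase in DISTRESS_PHRASES)

    def handle(self, message: str, generate_reply) -> str:
        """generate_reply stands in for whatever model produces the persona's answer."""
        self.history.append(("user", message))
        if self.needs_human_support(message):
            reply = REFERRAL  # boundary awareness: drop the persona, point to people
        else:
            reply = generate_reply(list(self.history))
        self.history.append(("bot", reply))
        return reply
```

The design choice worth noticing is that the distress check runs before the persona model, so a worrying message never receives an in-character answer.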

Limits of today’s technology—and where it fails

Despite the hype, current memorial AIs are far from omniscient. They can misinterpret context, offer generic platitudes, or, in rare but troubling cases, “hallucinate” inappropriate responses. The uncanny valley—where digital recreations are almost, but not quite, lifelike—can evoke discomfort or even distress.

- Data gaps: If the source material is sparse, conversations feel generic or stilted.

- Context blindness: AI struggles with complex, ambiguous, or highly emotional scenarios.

- Privacy risks: Weak security can expose private memories to exploitation or leaks.

- Emotional overreach: Bots can sometimes give advice or comfort inappropriately, lacking true understanding.

ACM CHI research (2024) points out that while most users find value in the comfort, 38% express concerns about the authenticity and emotional impact of simulated interactions.

A behind-the-scenes look: Building a lifelike memorial chat

Creating an interactive memorial isn’t plug-and-play. It’s a meticulous process, often requiring collaboration between families, technologists, and sometimes therapists.

First, users gather digital assets: text, voice, video. Then, AI professionals process and “train” the model, customizing it with stories, inside jokes, and personal quirks. Finally, the outcome is tested and fine-tuned, often in several iterations.

| Build Phase | Key Steps | Considerations |
|---|---|---|
| Data Gathering | Collect messages, photos, audio, video | Respect privacy, obtain permissions |
| Model Training | AI processes data, learns speech and style | Quality/quantity of data affects outcome |
| Avatar Creation | Optional: choose visuals, record voice samples | Decide between stylized or photorealistic representation |
| Beta Testing | Family/friends test and provide feedback | Emotional impact, accuracy, boundaries |
| Deployment | Launch for ongoing interactive use | Security, consent, user controls |

Table 2: Typical workflow of building a digital memorial conversation.

Source: Original analysis based on IEEE Spectrum, 2023 and Satori News, 2024
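The workflow in Table 2 is essentially an ordered pipeline with gates, and the Considerations column suggests where those gates belong. The Python sketch below models it that way, refusing to train without recorded permissions and refusing to deploy without beta sign-off. The class, the two boolean flags, and the `skip_avatar` option (reflecting that avatar creation is optional) are hypothetical names chosen for illustration, not any platform’s actual process.

```python
from enum import IntEnum


class Phase(IntEnum):
    DATA_GATHERING = 1
    MODEL_TRAINING = 2
    AVATAR_CREATION = 3
    BETA_TESTING = 4
    DEPLOYMENT = 5


class MemorialBuild:
    """Walks the build phases from Table 2 in order, with consent and sign-off gates."""

    def __init__(self, skip_avatar: bool = False):
        self.phase = Phase.DATA_GATHERING
        self.skip_avatar = skip_avatar   # avatar creation is optional
        self.consent_recorded = False    # permissions gathered during data collection
        self.family_signoff = False      # beta testers approved accuracy and boundaries

    def advance(self) -> Phase:
        if self.phase == Phase.DEPLOYMENT:
            raise ValueError("Memorial is already deployed")
        nxt = Phase(self.phase + 1)
        if nxt == Phase.AVATAR_CREATION and self.skip_avatar:
            nxt = Phase.BETA_TESTING
        # Gate 1: never train on personal data without permission.
        if nxt == Phase.MODEL_TRAINING and not self.consent_recorded:
            raise PermissionError("Record consent before model training")
        # Gate 2: never launch before family and friends have reviewed it.
        if nxt == Phase.DEPLOYMENT and not self.family_signoff:
            raise PermissionError("Beta testers must sign off before deployment")
        self.phase = nxt
        return nxt
```

Raising an error instead of silently skipping a gate keeps the ethical checkpoints explicit: the build simply cannot move forward until someone records the decision.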

The ethics no one wants to talk about: Memory, consent, and digital ghosts

Who owns your story when you’re gone?

The digital afterlife is a legal and ethical quagmire. Your stories, your data—who controls them after death? Is your digital ghost a living testament or a data point for corporate profit? With no global legal framework, ownership is unclear, and disputes between families, platforms, and AI providers are inevitable.

Key terms:

Digital legacy: The sum of your online presence, including social profiles, emails, and now AI avatars, left behind after death.

Posthumous consent: The right to determine, in advance, how your digital remains are used, memorialized, or erased.

Digital executor: A designated individual or entity responsible for managing your digital assets, including memorial platforms and interactive avatars, after your death.

“Ownership of digital remains is a legal gray area. Without explicit consent, families and companies are left to negotiate the boundaries of memory and privacy.” — Prof. Charles Lin, Digital Ethics Scholar, IEEE Spectrum, 2023

Consent and the afterlife: What would they have wanted?

Consent isn’t a checkbox; it’s a spectrum. Did your loved one explicitly want to “live on” as an AI? Or did they expect their stories to rest with them? Digital memorials force families to grapple with these questions—often too late.

- Many platforms now offer pre-death consent tools, allowing users to specify their wishes for posthumous digital presence.

- Families sometimes create avatars without clear consent, leading to ethical and emotional conflicts.

- Jurisdictions differ on digital inheritance, leaving gaps in protection and clarity.

Even when intentions are good, the risk of violating dignity or privacy lingers.

Deepfakes of the dead: Dignity or danger?

Deepfake technology powers many AI memorials, recreating faces and voices with shocking fidelity. But at what cost? Critics warn that resurrecting the dead blurs lines between tribute and exploitation, between healing and harm.

| Deepfake Use Case | Potential Benefits | Risk Factors |
|---|---|---|
| Memorial Avatars | Emotional comfort, closure, preservation | Misuse, unauthorized creation |
| Scheduled Video Messages | Family connection, legacy sharing | Manipulation, fraud risk |
| Historical Reenactments | Education, cultural preservation | Consent ambiguity, narrative distortion |
| Activism/Commemoration | Collective mourning (#BlackLivesMatter) | Trauma, retraumatization |

Table 3: Deepfake technology in digital memorials—opportunities vs. hazards.

Source: Original analysis based on Satori News, 2024

Ethical frameworks: What experts actually say

Ethicists stress a consent-first, dignity-centric approach. Many recommend establishing clear guidelines: explicit opt-ins, independent oversight, and robust privacy protections. According to IEEE Spectrum, 2023, most experts agree that interactive memorials should never replace human support—but can augment it when deployed transparently and ethically.

“Interactive memorials hold power to comfort, but without careful boundaries, they risk commodifying memory and undermining genuine grieving.” — Dr. Ellen Choi, Ethics Consultant, IEEE Spectrum, 2023

In practice, transparency, family dialogue, and user control are crucial for keeping the balance between remembrance and respect.

Case studies: Real people, real conversations with the digital departed

Speaking to a parent: Grief, closure, or something else?

Imagine this: after losing her father, Maria uploads years of voice memos, texts, and family videos to build a digital memorial on a leading platform. The result? A “conversation” that sounds eerily authentic, offering her comfort—but also unsettling reminders of what’s lost. According to Psychology Today, 2024, such experiences can:

- Provide emotional relief in acute grief, mimicking continued presence.

- Raise questions about authenticity, especially when the AI falters or improvises.

- Lead to dependency if the mourner relies too heavily on digital interactions.

- Help with closure, but sometimes prolong unresolved issues if not managed carefully.

Digital reunions: When friends reconnect beyond the grave

Friends, not just family, are turning to interactive memorials to keep bonds alive. In one case, a group of high school friends built an AI version of their late bandmate, reliving old jokes and shared memories. The experience was bittersweet: laughter mixed with an uncanny sense of loss, as the bot occasionally “invented” stories that never happened.

“It was comforting to hear his jokes again, but sometimes it reminded us of what can’t be replaced. The AI filled a void, but also made us confront the limits of memory.” — Interviewee, Memory Studies Review, 2024

Such reunions often reinforce the continuing bonds theory, helping friends mourn and heal together, but only when the AI’s limits are understood and respected.

The therapist’s dilemma: Does AI help or hinder healing?

Mental health professionals are divided. Some see interactive memorial conversations as a supplementary tool—a way to process emotions or rehearse tough dialogues. Others warn that AI memorials can disrupt natural grief trajectories, especially if used to avoid painful acceptance.

| Perspective | Core Position | Cautions |
|---|---|---|
| Supportive | Comfort, self-reflection, closure | Requires balanced use, risk of avoidance |
| Critical | May prolong denial, create dependency | Should not replace real-world relationships |
| Nuanced | Useful for some, harmful for others | Case-by-case, guided by mental health professionals |

Table 4: Therapist perspectives on interactive memorial conversations.

Source: Original analysis based on ACM CHI, 2024

Ultimately, the therapist’s role is to help mourners distinguish between healthy remembrance and unhealthy attachment to digital ghosts.

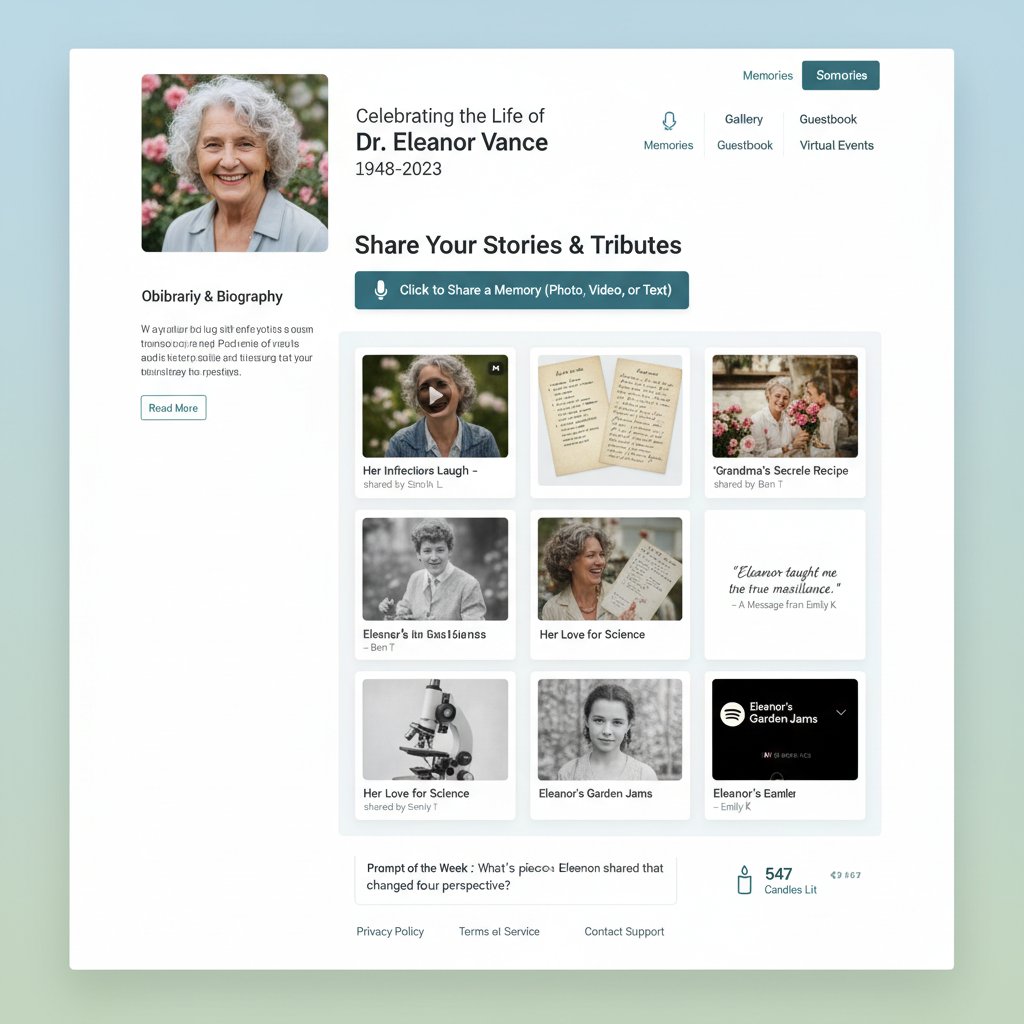

Step-by-step: Creating your own interactive memorial conversation

What you need before you begin

Preparation is everything. Before starting, gather digital assets and clarify your intentions.

- Collect all relevant digital materials: text messages, emails, photos, audio recordings, and videos.

- Obtain explicit consent from the individual (if possible) or family members.

- Research reputable platforms with robust privacy policies.

- Set emotional boundaries—know your purpose (comfort, closure, remembrance).

- Prepare for an emotional journey—interacting with a digital “ghost” can trigger unexpected feelings.

How to choose the right platform (without getting scammed)

Not all memorial platforms are created equal. Some offer privacy and authenticity; others prey on grief.

- Look for transparent privacy policies and user-controlled data management.

- Check for third-party security certifications and GDPR compliance.

- Seek out platforms with proven track records and positive testimonials.

- Avoid services that make outlandish claims (“immortality”) or refuse to clarify data usage.

- Consider whether you want just text, or also voice and avatar features.

Uploading, customizing, and fine-tuning the experience

Once you’ve chosen a platform:

- Upload your collected materials—photos, texts, audio, and video.

- Work with the platform’s onboarding tools to personalize the avatar (voice, image, key phrases).

- Test the avatar’s responses on low-emotion topics first.

- Adjust settings for privacy, conversational boundaries, and data access.

- Solicit feedback from trusted friends or family, and tweak as needed.

Fine-tuning is iterative—don’t expect perfection on the first try. The most rewarding experiences come from patience and honest feedback.

Testing and sharing: What to expect the first time

The first conversation with a digital memorial can be jarring—or cathartic. Expect technical glitches, emotional surges, and moments of eerie familiarity. It’s best to approach with an open mind and supportive company.

After testing, decide how (or if) to share the memorial with others. Some choose to keep the interaction private; others invite family and friends to join in collective remembrance.

Red flags and hidden benefits: What most guides won’t tell you

Warning signs you’re not ready (yet)

Interactive memorial conversations aren’t for everyone, and timing is crucial.

- You feel overwhelmed by the idea of interacting with a digital version of your loved one.

- You’re in the acute phase of grief and haven’t processed the initial loss.

- You expect the AI to “replace” real memories or relationships.

- You fear exposure of sensitive or private data.

- Significant family disagreements exist about pursuing a digital memorial.

Unexpected upsides: Healing, learning, and legacy

Despite risks, many users report surprising benefits:

- Facilitates healing by allowing mourners to say what was left unsaid.

- Helps teach children about family history in a deeply engaging way.

- Fosters intergenerational dialogue—stories stay alive, not static.

- Supports collective mourning, especially in dispersed families or activist causes (#BlackLivesMatter digital memorials).

"The most profound impact was realizing how much we’d forgotten—and how much we still shared. The AI became a bridge, not a substitute." — User testimonial, Memory Studies Review, 2024

How to avoid common pitfalls

To maximize benefits and minimize harm:

- Set clear emotional boundaries before starting.

- Involve trusted friends or family in the process for support.

- Use the memorial as one tool among many—not a replacement for human connection.

- Regularly revisit consent and privacy preferences.

- Seek professional help if grief feels overwhelming or if dependency develops.

The future of remembrance: Where AI, culture, and grief collide

Emerging trends: From holograms to persistent avatars

The digital afterlife is evolving rapidly, with new trends pushing the boundaries of what’s possible—and acceptable.

- Holographic memorials: 3D projections of the deceased for immersive ceremonies.

- Persistent avatars: AI agents that “exist” across multiple platforms, including VR and AR.

- Scheduled messaging: Pre-recorded or AI-generated messages that “arrive” on birthdays, anniversaries, or other milestones.

- Collective digital rituals: Virtual gatherings for communities or activist causes, blending personal and social mourning.

- Voice cloning on demand: Ultra-realistic, emotionally nuanced recreations for personalized conversations.
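Scheduled messaging sounds simple, but even the date arithmetic has an edge case: milestones on February 29. A minimal Python sketch of finding the next delivery date for a yearly milestone follows. The policy of moving a February 29 message to February 28 in non-leap years is an assumption made for illustration; a real service might choose March 1 instead, or ask the family.

```python
from datetime import date


def milestone_in_year(year: int, month: int, day: int) -> date:
    """The milestone's date in a given year; Feb 29 falls back to Feb 28 (assumed policy)."""
    try:
        return date(year, month, day)
    except ValueError:
        if (month, day) == (2, 29):
            return date(year, 2, 28)
        raise  # any other invalid date is a genuine input error


def next_delivery(month: int, day: int, today: date) -> date:
    """Next date, today included, on which a yearly milestone message should arrive."""
    this_year = milestone_in_year(today.year, month, day)
    if this_year >= today:
        return this_year
    return milestone_in_year(today.year + 1, month, day)
```

For example, `next_delivery(3, 14, date(2024, 3, 15))` returns `date(2025, 3, 14)`, because this year’s date has already passed.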

Could digital memorials become the norm?

Public opinion is nearly evenly split, which suggests a tipping point may be near. In 2024, 35% of global consumers found AI-based recreation of the dead acceptable, while 38% remained opposed (MiniMe Insights, 2024). Whether interactive memorials become normal will depend on trust, transparency, and proven value.

| Adoption Factor | Current Status | Challenges |
|---|---|---|
| Consumer Willingness | 35% accept, 38% oppose | Privacy, ethics, cultural resistance |
| Tech Maturity | Improving rapidly, but still imperfect | Uncanny valley, data requirements |
| Regulatory Landscape | Fragmented, few global standards | Cross-border privacy, consent gaps |
| Emotional Efficacy | Mixed: positive for some, negative for others | Risk of dependency, authenticity doubts |

Table 5: Barriers and drivers for mainstream adoption of digital memorial conversations.

Source: MiniMe Insights, 2024

Still, as technology becomes more deeply woven into daily life, interactive memorial conversations are moving from taboo toward trend.

What happens when the tech outlives the memory?

One lasting dilemma: digital memorials can persist long after family ties have faded, or when descendants no longer remember—or want—the legacy. Who maintains, archives, or deletes these digital ghosts?

- Unattended avatars risk becoming digital “zombies,” generating content with no audience.

- Family disputes may arise over what to keep or erase.

- Platforms may shutter, risking permanent data loss or unwanted exposure.

Beyond the hype: Contrarian views and critical debates

Is grieving with AI a coping tool or a crutch?

Critics warn that digital mourning can become an escape from the difficult work of grief. Some call it “emotional outsourcing,” replacing acceptance with algorithmic comfort.

"Digital memorials offer solace, but they can also trap us in a feedback loop of nostalgia, preventing the acceptance that healthy grief requires." — Dr. Amira Shah, Bereavement Counselor, SAGE Journals, 2024

- Some mourners report struggling to let go when digital conversations feel “too real.”

- The risk of mistaking simulation for genuine presence is highest during acute grief.

- Experts urge a balanced approach: using AI as a supplement, not a substitute, for mourning.

The risk of digital dependency

Emotional connection is a double-edged sword. While digital memorials offer comfort, they can also foster dependency—especially if users rely on bots for daily support.

Over time, this can erode resilience, increase isolation, or blur the line between memory and reality.

- Set time limits for interaction.

- Maintain real-world rituals alongside digital remembrance.

- Seek support groups or counseling if dependency emerges.
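Setting time limits is easier to stick to when usage is measured. The Python sketch below shows one way a platform or a personal tracker might tally minutes per day against a limit the user chooses. The 20-minute default and the idea of counting over-limit days in a week as a signal to check in are arbitrary assumptions, not clinical guidance.

```python
from datetime import date, timedelta


class UsageLimiter:
    """Tallies time spent with a memorial bot per day against a self-chosen limit."""

    def __init__(self, daily_limit_minutes: int = 20):  # default is an arbitrary example
        self.daily_limit = timedelta(minutes=daily_limit_minutes)
        self.usage = {}  # maps date -> total timedelta spent that day

    def log_session(self, day: date, minutes: float) -> None:
        self.usage[day] = self.usage.get(day, timedelta()) + timedelta(minutes=minutes)

    def remaining(self, day: date) -> timedelta:
        left = self.daily_limit - self.usage.get(day, timedelta())
        return max(left, timedelta())  # never report negative time

    def days_over_limit(self, start: date, days: int = 7) -> int:
        """Days in the window that exceeded the limit: a prompt to reflect, not a diagnosis."""
        window = (start + timedelta(days=i) for i in range(days))
        return sum(1 for d in window if self.usage.get(d, timedelta()) > self.daily_limit)
```

Looking at a week rather than a single day keeps one hard evening from reading as a pattern, while a run of over-limit days is a reasonable cue to lean on real-world support.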

Voices from the edge: What the skeptics get right

Skeptics caution against the unchecked commodification of grief. They argue that interactive memorials should never replace real-world relationships or rituals.

"When we algorithmically reconstruct the dead, we risk reducing lives to data points and grief to a subscription service." — Prof. Naomi Kim, Digital Humanities, Memory Studies Review, 2024

| Skeptic Concern | Validity | Mitigation |
|---|---|---|
| Grief commodification | High: profit-driven models can exploit pain | Transparent pricing, community support |
| Authenticity anxiety | Moderate: AI sometimes falters | Ongoing training, user feedback |
| Privacy breaches | High: data leaks can cause harm | Advanced encryption, user-controlled consent |

Table 6: Contrarian critiques and mitigation strategies for digital memorial conversations.

Source: Original analysis based on Memory Studies Review, 2024

Expert tips: Making the most of interactive memorial conversations

Best practices for healthy engagement

To ensure a meaningful, safe experience:

- Start with a clear purpose—know why you want to engage.

- Set boundaries: frequency, duration, emotional limits.

- Involve family or a support network.

- Regularly review privacy settings and data use.

- Use digital memorials alongside, not instead of, traditional remembrance.

When to seek offline support

Interactive memorials can comfort, but they’re not a panacea.

- If you feel stuck, depressed, or avoid real-life relationships, seek professional help.

- If family conflict intensifies over the memorial, pause and mediate offline.

- Use digital tools as part of a broader healing journey, not the sole solution.

Resources for further exploration

For those ready to dig deeper:

- MiniMe Insights Digital Afterlife Report (2024)

- IEEE Spectrum: Digital Afterlife (2023)

- Psychology Today: Navigating Grief in the Digital Age (2024)

- Memory Studies Review: AI and Mourning (2024)

- Satori News: AI’s Ethical Quandary (2024)

Key terms:

Support groups: Peer-led or professionally guided spaces for sharing grief and experiences, often helpful when navigating digital mourning tools.

Digital executor: The person chosen to manage a loved one’s digital assets, ensuring their wishes are respected.

Digital directive: Legal or platform-provided documentation specifying wishes for posthumous digital presence.

Digital legacy and privacy: What happens to your data after death?

The new rules of digital inheritance

Digital inheritance is a legal minefield. Unlike physical assets, digital remains often fall outside traditional wills, creating confusion and conflict.

Key terms:

Digital will: A document specifying how your online accounts and digital assets should be handled after death.

Legacy contact: A person authorized to manage (or close) your digital accounts, as defined by platforms like Facebook and Apple.

Platform terms of service: Platform-specific rules that often override legal directives, dictating what happens to digital assets post-mortem.

According to MiniMe Insights, 2024, most consumers remain unaware of these rules—leaving digital legacies vulnerable to loss or misuse.

Protecting your identity—and your loved ones’ memories

Security is nonnegotiable. To safeguard digital legacies:

- Use strong, unique passwords for each account.

- Designate a digital executor in your will.

- Regularly update privacy settings on memorial platforms.

- Choose services with end-to-end encryption and transparent data use.

- Store backup copies of key digital assets offline.
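For the first safeguard above, strong and unique passwords, Python’s standard `secrets` module is designed for exactly this job, and the sketch below follows the pattern shown in its documentation: draw characters with a cryptographically secure generator and retry until every character class is present. The 12-character minimum, the default length of 20, and the `passwords_for` helper are assumptions chosen for illustration; in practice a reputable password manager does the same thing and also stores the results.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Random password from a cryptographically secure source (secrets, not random)."""
    if length < 12:  # assumed minimum for this sketch
        raise ValueError("Use at least 12 characters")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        # Retry until all four character classes appear, so strict sites accept it.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate


def passwords_for(accounts: list[str]) -> dict[str, str]:
    """One independent password per account, so a single leak exposes nothing else."""
    return {account: generate_password() for account in accounts}
```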

Planning your own virtual legacy

Take control of your story:

- Inventory your digital assets—social profiles, emails, cloud storage, AI memorials.

- Draft a digital will, specifying your wishes for each asset.

- Appoint a trusted legacy contact or digital executor.

- Communicate your wishes clearly to family and chosen contacts.

- Regularly revisit and update your digital legacy plan.

A little planning spares your loved ones stress, confusion, and potential conflict when the time comes.
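The planning steps above amount to keeping a small, structured inventory. The Python sketch below represents one as data and flags obvious gaps: no executor, an asset without a clear instruction, or a plan that has not been reviewed in a year. The four allowed actions, the one-year review interval, and all class and field names are hypothetical; a structure like this can organize your wishes, but it does not replace a digital will that follows the rules where you live.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Hypothetical instruction vocabulary for this sketch.
VALID_ACTIONS = {"memorialize", "transfer", "archive", "delete"}


@dataclass
class DigitalAsset:
    name: str    # e.g. "Email account", "Cloud photo library", "AI memorial"
    action: str  # what should happen to it after death
    notes: str = ""


@dataclass
class LegacyPlan:
    owner: str
    executor: str  # digital executor or legacy contact
    assets: list[DigitalAsset] = field(default_factory=list)
    last_reviewed: Optional[date] = None

    def problems(self, today: date, review_every_days: int = 365) -> list[str]:
        """Return human-readable gaps in the plan; an empty list means none were found."""
        issues = []
        if not self.executor:
            issues.append("No digital executor appointed")
        for asset in self.assets:
            if asset.action not in VALID_ACTIONS:
                issues.append(f"{asset.name}: unclear instruction '{asset.action}'")
        if self.last_reviewed is None or (today - self.last_reviewed).days > review_every_days:
            issues.append("Plan is due for review")
        return issues
```

Restricting instructions to a short fixed vocabulary is the key design choice: an executor can act on “delete” or “memorialize,” but not on a vague note left for them to interpret.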

Supplements: Adjacent topics, misconceptions, and practical impacts

Common myths about interactive memorial conversations debunked

- “AI memorials are just for techies.” In reality, platforms are increasingly user-friendly, with intuitive onboarding.

- “Digital avatars are always accurate.” Without enough data, AIs can only approximate personalities.

- “Memorial bots replace human grief.” They’re a supplement—not a substitute—for therapy, rituals, or real relationships.

- “Privacy isn’t a concern.” Insecure platforms put your memories at risk of theft or misuse.

- “All families want the same thing.” Attitudes toward digital remembrance vary widely by culture, religion, and personal preference.

“No technology, however advanced, can replace the messiness or beauty of human memory—it can only hold a mirror to it.” — Dr. Ruth Miller, Digital Culture Researcher, Psychology Today, 2024

Psychological impacts: What research reveals

Recent studies show nuanced impacts:

| Impact Area | Positive Effects | Negative Effects |
|---|---|---|
| Grief Processing | Facilitates closure, reduces acute distress | May delay acceptance, risk of dependency |
| Social Support | Encourages community mourning, shared stories | Potential for online harassment or trolling |
| Legacy Preservation | Keeps stories alive across generations | Digital decay, data loss over time |
| Privacy & Security | New safeguards for sensitive data | Increased risk of hacking or misuse |

Table 7: Psychological and practical impacts of interactive memorial conversations.

Source: Original analysis based on Psychology Today, 2024, ACM CHI, 2024

In line with the expert guidance above, platforms like theirvoice.ai work best as one part of a comprehensive approach to memorialization, combining digital tools with traditional rituals and supportive communities.

Practical scenarios: Who benefits most—and who should avoid?

Interactive memorial conversations are most beneficial for:

- Grieving families seeking comfort and ongoing connection.

- Family historians preserving stories for future generations.

- Elderly individuals combating loneliness after loss.

- Communities engaging in collective or activist mourning.

They may NOT be suitable for:

- Individuals in acute distress or struggling with mental health challenges.

- Families with unresolved conflict about digital memorialization.

- Those lacking trust in the platform’s privacy and security protocols.

Each journey is unique—listen to your needs, stay informed, and remember: the digital afterlife is a tool, not a destination.

Conclusion

The digital afterlife isn’t a distant concept—it’s the present reality, recasting grief, legacy, and remembrance in the language of algorithms and avatars. Interactive memorial conversations are more than technological novelties—they’re a profound shift in how we cope with loss, sustain connection, and confront mortality in the twenty-first century. The journey is fraught: comfort and closure balanced against dependency and ethical dilemmas, innovation weighed against tradition and privacy. As research reveals, interactive memorials have the power to heal, but only when used with intention, transparency, and care. For those ready to navigate this brave new world, platforms like theirvoice.ai offer a bridge between memory and presence—a chance to connect, reflect, and ultimately, heal. The question isn’t whether you’d talk to a digital ghost, but what you’d hope to find on the other side of the conversation.

Sources

References cited in this article

- MiniMe Insights (minimeinsights.com)

- IEEE Spectrum (spectrum.ieee.org)

- Memory Studies Review (brill.com)

- Satori News (satorinews.com)

- Psychology Today (psychologytoday.com)

- SAGE Journals (journals.sagepub.com)

- ACM CHI (dl.acm.org)

- Business Money (business-money.com)

- British Journal of Social Psychology (bpspsychub.onlinelibrary.wiley.com)

- Pew Research (pewtrusts.org)

- Liebertpub (liebertpub.com)

- BeyondReminisce (beyondreminisce.com)

- HatchWorks (hatchworks.com)

- ScienceDaily (sciencedaily.com)

- Death with Dignity (deathwithdignity.org)

- ResearchGate (researchgate.net)

- TechXplore (techxplore.com)

- European Law Institute (europeanlawinstitute.eu)

- Trend Micro (news.trendmicro.com)

- PubMed (pubmed.ncbi.nlm.nih.gov)

- Infonetica (infonetica.net)

- Victoria Albert Institute (victoria-albert.com.au)

- ScienceDirect (sciencedirect.com)

- Ashes to Ashes (ashestoashesinc.com)

- Wikipedia (en.wikipedia.org)

- CNET (cnet.com)

- Springer (link.springer.com)

- Heaven.org Guide (heaven.org)

- LetsReimagine (letsreimagine.org)

- Chronicle.rip (chronicle.rip)

- Cloudforce (gocloudforce.com)

- Microsoft Learn (learn.microsoft.com)

- AIWire (aiwire.net)
